I'm a slow talker. If you were being kind, you might describe my pace as "pensive." If you were being accurate, you might describe it as "bumbling." As a result of my slowness, I say "uh" and especially "um" a lot. A whole lot. So much, in fact, that my son frequently demands out of frustration that I "stop saying um!" In the literature, such verbal fillers, and the related "er," "I mean," "you know," and in children from the 80s and the Aughts, "like," are mercifully referred to as disfluencies, or even more mercifully as "performance additions." And over the last decade, psycholinguists (not a very merciful name) have found that they may actually play important roles in speech.

Traditionally, "uh" and "um" were thought to be involuntary products of a momentary difficulty in processing what one wants to say, or in deciding whether one actually wants to say it, and therefore to be meaningless themselves. Alternatively, they were seen as merely a means of preventing people from interrupting during a pause in speaking. There's an obvious problem with this view, though. Why do we have more than one such marker of disfluency? In fact, English isn't the only language with more than one. Clark and Fox Tree1 found equivalents of both "uh" and "um" or similar fillers in multiple languages, including Japanese, Spanish, Norwegian, Swedish, Dutch, French, German, and Hebrew. Distinct elements with no differences rarely survive in a language, much less several languages from different families, so there must be something to "uh" and "um."

Friday, June 21, 2013

Thursday, May 16, 2013

Does the Size of Your Arms Affect Your Politics?

It does if you're male, apparently. At least, so says a paper by Petersen et al1 titled "The ancestral logic of politics: Upper body strength regulates men’s assertion of self-interest over economic redistribution," in press in Psychological Science (you can read the full manuscript here). Their methodology was pretty simple: for male and female participants in Argentina, Denmark, and the U.S., they measured the "circumference of the flexed bicep of the dominant arm," a strong indicator of upper body strength, and then correlated those measurements with their answers to several questions that measured support for economic redistribution policies.

For women, there was no relationship between arm size and support for redistribution. For men, however, there was a statistically significant relationship, even controlling for political ideology. In other words, regardless of whether you considered yourself liberal or conservative, your arm size was a good predictor of your support for economic redistribution. The direction of the relationship depended on socioeconomic status (SES), though. For men of (self-reported) below average SES, support for redistribution increased with upper body strength, while for men of above average SES, support decreased with upper body strength. You can see that in the charts below (from Petersen et al's Figure 1, representing marginal effects from their Ordinary Least Squares model).

What does this mean? Here's how Petersen et al put it:

The asymmetric war of attrition model of animal conflict predicts that animals use advantages in fighting ability to bargain for increased access to resources. Equally, it predicts that attempts to self-interestedly increase resource share should not be initiated when at a competitive disadvantage. The findings reported here show that this model generalizes to humans, successfully predicting the distribution of support for, and opposition to, redistribution in three different nations.

I have to admit that the "asymmetric war of attrition model" sounds pretty cool, but also somewhat vague, particularly when it comes to mechanisms, a fact that Petersen et al. admit when they write, "the findings of this study are silent with regards to the precise proximate variables that mediate between upper body strength and psychological traits." Whatever gets males from "asymmetric war of attrition" to arm size mediating political views, though, this provides yet another piece of evidence that our politics are less well thought out than we like to think. I mean, it's not like our arms predict better or worse political reasoning, right? Particularly since the effect of arm size on support for redistribution is dependent on one's SES (suggesting that the key here is self-interest) and gender, and is independent of political ideology.

1 Petersen, M. B, Sznycer, D., Sell, A., Cosmides, L., & Tooby, J. (in press). The ancestral logic of politics: Upper body strength regulates men’s assertion of self-interest over economic redistribution. Psychological Science.

Tuesday, May 14, 2013

Cool Visual Illusions: Flashed Face Distortion Effect

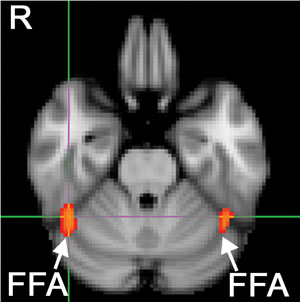

As a highly social species, recognizing faces, and the expressions on them, is pretty important to us. It's so important, in fact, that our visual systems have areas primarily devoted to faces (e.g., the fusiform face area, shown in the picture on the left). As a result, we're pretty good at it. Researchers are still trying to figure out exactly how we do it well, but that we do it well, and that our brains treat faces as special, is certain.

One thing researchers do know is that features like the nose, eyes, and mouth, and their configuration (their size, position, and relationship to each other) are important. A recently (and accidentally) discovered facial illusion makes the importance of features clear:

I recommend watching the video twice. The first time, follow the instructions at the beginning and keep your eyes on the cross in the center. Then watch it again, looking at one or the other of the faces directly. Freaky, right?

The discoverers of this illusion, Tangen et al. (you can read their paper here, or their short post on the illusion here), suggest that what's happening here is that, when the faces begin to scroll, we process the features of one face relative to the features of the next face, which causes both our perception of the features themselves and their configuration to become distorted. In a sense, our brains don't realize that the different features belong to different faces, so they create horribly distorted faces as a result.

Oh, and they also have a celebrity version:

Wednesday, May 8, 2013

Infants Totally Not Fooled By Robots

Lately I've been worrying about potential robot influence on babies during the coming human-robot global conflict, but a journal alert popped up in my inbox today that has allayed my fears:

Infants understand the referential nature of human gaze but not robot gaze

Abstract: Infants can acquire much information by following the gaze direction of others. This type of social learning is underpinned by the ability to understand the relationship between gaze direction and a referent object (i.e., the referential nature of gaze). However, it is unknown whether human gaze is a privileged cue for information that infants use. Comparing human gaze with nonhuman (robot) gaze, we investigated whether infants’ understanding of the referential nature of looking is restricted to human gaze. In the current study, we developed a novel task that measured by eye-tracking infants’ anticipation of an object from observing an agent’s gaze shift. Results revealed that although 10- and 12-month-olds followed the gaze direction of both a human and a robot, only 12-month-olds predicted the appearance of objects from referential gaze information when the agent was the human. Such a prediction for objects reflects an understanding of referential gaze. Our study demonstrates that by 12 months of age, infants hold referential expectations specifically from the gaze shift of humans. These specific expectations from human gaze may enable infants to acquire various information that others convey in social learning and social interaction.

Infants develop the ability to follow the gaze of adults around 9-11 months of age, and it's not a coincidence that soon after that they start to speak their first words. Being able to tell what an adult is looking at is a key component of figuring out what the hell those strange sounds the adult is emitting might mean. This study suggests that by 12 months of age, humans are not only able to tell that adults are looking at something intentionally, but they reserve this inference for humans specifically.

Tuesday, May 7, 2013

It's Gotta Be the Shoes!

I remember that when I, however rarely, got the "hot hand" in a game, my basketball coach would yell "it's gotta be the shoes!" He knew I had the hot hand, and he'd tell my teammates to get me the ball, but he couldn't attribute the hotness to me, so he joked it must be the shoes.

The truth, of course, is that I didn't have the hot hand, and neither did my shoes. That's because there's no such thing as the "hot hand." In a classic paper, Gilovich et al.1 showed this by looking at every shot taken by the 1980-81 Philadelphia 76ers in their 48 home games (including the playoffs). They found that making a shot didn't increase the probability of making the next shot. In fact, it decreased that probability slightly. In other words, Dr J was slightly less likely to make a shot if he'd made his last shot! Furthermore, looking at the "runs" (streaks of misses or makes) showed no evidence of a grouping of makes or misses above what you would expect by chance.
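To make the two checks described above concrete, here is a minimal Python sketch. It is not the analysis from the paper: the function names, the simulated shooter, and the 50% make probability are all assumptions chosen for illustration, and the shots are synthetic rather than real 76ers data. It compares the hit rate after a make with the hit rate after a miss, and finds the longest streak in a sequence.

```python
import random


def conditional_hit_rates(shots):
    """Return (rate after a make, rate after a miss) for a list of 0/1 shots."""
    after_make = [cur for prev, cur in zip(shots, shots[1:]) if prev == 1]
    after_miss = [cur for prev, cur in zip(shots, shots[1:]) if prev == 0]

    def rate(outcomes):
        # NaN signals that there were no shots of this kind to average over.
        return sum(outcomes) / len(outcomes) if outcomes else float("nan")

    return rate(after_make), rate(after_miss)


def longest_streak(shots, value=1):
    """Length of the longest run of `value` (1 = makes, 0 = misses)."""
    best = current = 0
    for shot in shots:
        current = current + 1 if shot == value else 0
        best = max(best, current)
    return best


def simulate_shooter(n_shots, p_make, seed=0):
    """Hypothetical shooter whose shots are independent (no hot hand at all)."""
    rng = random.Random(seed)
    return [1 if rng.random() < p_make else 0 for _ in range(n_shots)]


if __name__ == "__main__":
    # Synthetic data only: 1000 independent shots at an assumed 50% make rate.
    shots = simulate_shooter(n_shots=1000, p_make=0.5, seed=1)
    after_make, after_miss = conditional_hit_rates(shots)
    print(f"P(make | previous make) = {after_make:.3f}")
    print(f"P(make | previous miss) = {after_miss:.3f}")
    print(f"Longest streak of makes = {longest_streak(shots)}")
```

Even though this simulated shooter has no hot hand by construction, running it will typically turn up streaks of makes that feel meaningful, which is the perception problem at issue here.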

Why does pretty much everyone believe in the hot hand if, in fact, it doesn't exist? Well, because our brains are not very good at figuring out probabilities or perceiving randomness, and they're primed to see patterns where they don't exist, so all it takes is a small run to make us think something good is going on. Or as Gilovich et al. put it (pp. 311-312):

Evidently, people tend to perceive chance shooting as streak shooting, and they expect sequences exemplifying chance shooting to contain many more alternations than would actually be produced by a random (chance) process. Thus, people "see" a positive serial correlation in independent sequences, and they fail to detect a negative serial correlation in alternating sequences. Hence, people not only perceive random sequences as positively correlated, they also perceive negatively correlated sequences as random... We attribute this phenomenon to a general misconception of the laws of chance associated with the belief that small as well as large sequences are representative of their generating process. This belief induces the expectation that random sequences should be far more balanced than they are, and the erroneous perception of a positive correlation between successive shots.

More recently, Yigal Attali, in a paper in press, shows that even people who spend their entire lives around basketball can't get past the "hot hand" belief. Here is the abstract:

Although “hot hands” in basketball are illusory, the belief in them is so robust that it not only has sparked many debates but may also affect the behavior of players and coaches. On the basis of an entire National Basketball Association season’s worth of data, the research reported here shows that even a single successful shot suffices to increase a player’s likelihood of taking the next team shot, increase the average distance from which this next shot is taken, decrease the probability that this next shot is successful, and decrease the probability that the coach will replace the player.

So the belief in the hot hand causes players to not only be more likely to take the next shot, but to take more difficult shots just because they made their last shot, even though they're more likely to miss that second shot. Despite this, coaches are so convinced of the hot hand effect that they're less likely to punish players for the resulting poor decisions. Again, what's amazing about this is that these people are experts in basketball, and have witnessed many thousands of in-game combinations of shots, but they're still unable to perceive that making one shot doesn't make it more likely that the same player will make the next shot, especially if that next shot is an even more difficult one.

I blame the shoes.

1 Gilovich, T., Vallone, R., and Tversky, A. (1985). The hot hand in basketball: On the misperception of random sequences. Cognitive Psychology, 17, 295-314.

Monday, May 6, 2013

Penrose Triangle Meets Kanizsa's Triangle

Everyone knows the Penrose triangle (image from here). It is one of many impossible objects that result from our visual system interpreting a possible 2-dimensional figure as a 3-dimensional impossible one. Last week I talked about Kanizsa's triangle, an illusion in which our brains are tricked into perceiving contours where none exist. Christopher Tyler of the Smith-Kettlewell Institute has produced an image that combines the two.

To do so, he took a basic, though pretty cool looking version of Kanizsa's triangle (Tyler's original image with the white lines removed):

And added 3 white lines, to produce this (his original image):

The white lines produce a depth effect which makes the triangle appear 3D, and therefore impossible, as in the Penrose triangle. It took me a minute to get the effect, so look again if you don't see it the first time. I find it's best if you look just off center (e.g., at the center of the top left circle).

This illusion was one of the finalists in the 2011 Illusion of the Year contest (you can see a larger version of the image there).

Friday, May 3, 2013

Violent Video Games as an Outlet For Frustrated Desires to Do Bad Things

A cool pair of studies by Whitaker et al in the April issue of Psychological Science suggests that frustration at the inability to do something bad may make violent video games more attractive. Here is the abstract:

Although people typically avoid engaging in antisocial or taboo behaviors, such as cheating and stealing, they may succumb in order to maximize their personal benefit. Moreover, they may be frustrated when the chance to commit a taboo behavior is withdrawn. The present study tested whether the desire to commit a taboo behavior, and the frustration from being denied such an opportunity, increases attraction to violent video games. Playing violent games allegedly offers an outlet for aggression prompted by frustration. In two experiments, some participants had no chance to commit a taboo behavior (cheating in Experiment 1, stealing in Experiment 2), others had a chance to commit a taboo behavior, and others had a withdrawn chance to commit a taboo behavior. Those in the latter group were most attracted to violent video games. Withdrawing the chance for participants to commit a taboo behavior increased their frustration, which in turn increased their attraction to violent video games.

In two studies, then, participants who were given the opportunity to do something bad -- cheat on an exam in the first, and steal money in the second -- and then had that opportunity taken away found violent video games most attractive, and the level of attractiveness for these games was closely related to their self-reported level of frustration. So, next time you want to rob a bank, but find yourself unable to do so, play Battlefield 3.

Amusingly, the participants in the stealing study who were given the opportunity to steal actually did steal money. Those who were given the opportunity to steal and then had the opportunity taken away managed to get an average of 35 cents before the experimenter started watching them, while those who had the opportunity to steal throughout the whole study got away with 75 cents on average. Ah, college undergrads.

Wednesday, May 1, 2013

When Monkeys Conform

There's an interesting article on Science Daily titled "'When in Rome': Monkeys Found to Conform to Social Norms," reporting on research by Erica van de Waal, Andrew Whiten, and Christèle Borgeaud. From the article:

The research was carried out by observing wild vervet monkeys in South Africa. The researchers originally set out to test how strongly wild vervet monkey infants are influenced by their mothers' habits.

and

But more interestingly, they found that adult males migrating to new groups conformed quickly to the social norms of their new neighbours, whether it made sense to them or not.

In the initial study, the researchers provided each of two groups of wild monkeys with a box of maize corn dyed pink and another dyed blue. The blue corn was made to taste repulsive and the monkeys soon learned to eat only pink corn. Two other groups were trained in this way to eat only blue corn.

A new generation of infants were later offered both colours of food -- neither tasting badly -- and the adult monkeys present appeared to remember which colour they had previously preferred.

Almost every infant copied the rest of the group, eating only the one preferred colour of corn.

The crucial discovery came when males began to migrate between groups during the mating season.

The researchers found that of the ten males who moved to groups eating a different coloured corn to the one they were used to, all but one switched to the new local norm immediately.

Cultural transmission like this is thought to be a big reason why humans are so much more advanced, mentally, than other primates (e.g.). Observing even a little bit of it in other primates is a pretty big deal, then.

Tuesday, April 30, 2013

Kanizsa's Triangle: Illusory Contours and the Inferring of the Perceptual World

Since I said a little bit about the title of the blog, I figured I'd say a bit about the image in the banner as well. It's a "Rubin Vase," a "bi-stable" image (sort of like a Necker cube, a duck-rabbit, or the "Old Woman-Young Woman") in which our brains alternate which part is the figure, and which part is the ground, so that at times it appears as a vase, and at times it appears as two faces, (usually) without us seeing both at the same time. Now, since I'm lazy, and since it's unlikely anyone's reading this anyway, I'm not going to talk about the Rubin Vase, because to explain it would require a detailed explanation of figure-ground processing, as well as facial recognition (our brains have special areas just for faces, and it turns out the Rubin Vase activates those areas). That's just too much work. But I included the image in the banner because I dig visual illusions, so instead I'll talk about a (comparatively) simpler one, and maybe someday get to Rubin's.

My thing, my groove you might say, in the study of the mind is all about the ways in which our brains build our world, and how little “we”, by which I mean our conscious selves, have any say in it. At higher, cognitive levels, heuristics and biases, unconscious processing, and “hot” reasoning – that is, affect or emotion-driven reasoning – do the bulk of the work in ways that we're all familiar with (if you don't know what I'm talking about, get into an argument on the internet and watch these biases, “hot” processes, and all the other unconscious ways in which we build the world play out in virtual time). At a lower level, though, visual illusions reveal even more interesting, and perhaps frightening, ways in which our brains build our perceptual world. They show that we don't simply take in reality and represent it as it is out there, independent of us. Instead, we meet it with a bunch of built in assumptions and rules of inference that, when conditions are right, cause us to mis-see things out there.

Monday, April 29, 2013

About the Blog's Title

This blog is going to be (mostly) about cognitive psychology, so I wanted a title that was a nod to something, or someone, important to the field. After a bit of deliberation, I decided Hermann Ebbinghaus was a pretty good candidate, but Ebbinghaus is kind of a weird blog title, so I went with his most famous finding, the forgetting curve. You can easily find short descriptions of Ebbinghaus’ life and work online, but I thought I’d say a little bit about why he’s important. By 1885, when Ebbinghaus published his classic work usually translated into English as Memory: A Contribution to Experimental Psychology (which you can read in its entirety here), the study of the human mind or soul was already a couple millennia old at least, but to that point it had primarily been pursued via reasoning from introspection (even Wundt, generally considered the father of modern psychology, used an introspective method). For some time, psychophysical phenomena such as vision and audition had been studied using the methods of natural science, because these phenomena were thought to fall under the purview of biology. Higher-order mental phenomena like memory or reasoning, on the other hand, were thought to be, if not of a different metaphysical sort than the biological, at least not amenable to the same methods of investigation. Ebbinghaus (and others) thought otherwise, and he set about both to demonstrate that the scientific method could be applied to cognition, and to learn something about memory in the process. So he says in the preface:

In the realm of mental phenomena, experiment and measurement have hitherto been chiefly limited in application to sense perception and to the time relations of mental processes. By means of the following investigations we have tried to go a step farther into the workings of the mind and to submit to an experimental and quantitative treatment the manifestations of memory.

So using only one experimental subject, himself, Ebbinghaus set about memorizing lists of nonsense syllables and, to determine how memory for familiar and meaningful syllables might differ, six stanzas of Byron’s Don Juan. He explored memory from several different perspectives, looking at how quickly lists of different lengths are learned, how his memory for the syllables was affected by how long he studied them (SPOILER: studying them longer makes you remember more for a longer period of time), how repeatedly learning the lists affected memory, and how the order of the syllables influenced memory. The forgetting curve, however, comes from his exploration of the effect of time on retention.

Here’s what he did, in short: he first learned the syllables, or stanzas, until he could repeat them all in order perfectly. Then he’d wait for some period of time and relearn them. He measured his retention by comparing the number of times it took him to relearn the list perfectly to the number of times it had originally taken him to learn it. The fewer times it took him, the more work his retention of the list had saved him, so he called the measure “savings.” If he was able to recall the list perfectly on his first try after the delay, retention was 100%. If it had taken him 10 times to learn the list the first time, and he recalled it in 2 tries the second time, then retention was 80%. If it took him 8 tries, retention was 20%. And so on (the complete numbers are in the table at the top, taken from his book).
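The savings arithmetic above can be written out as a short Python sketch. The function name is made up for illustration, and the sketch assumes that "recalled perfectly on the first try" means zero relearning trials were needed, which is what makes that case come out to 100%.

```python
def savings_percent(original_trials, relearning_trials):
    """Percent of the original learning effort saved when relearning a list.

    original_trials: repetitions needed to learn the list the first time.
    relearning_trials: repetitions needed to relearn it after the delay
        (assumed to be 0 if the list is recalled perfectly straight away).
    """
    if original_trials <= 0:
        raise ValueError("original_trials must be positive")
    if relearning_trials < 0:
        raise ValueError("relearning_trials cannot be negative")
    return 100.0 * (original_trials - relearning_trials) / original_trials


if __name__ == "__main__":
    # The worked cases from the paragraph above: 10 trials to learn originally.
    for relearn in (0, 2, 8):
        print(relearn, "relearning trials:", savings_percent(10, relearn), "% savings")
```

Nothing stops the measure from going negative if relearning takes more repetitions than the original learning did; the sketch leaves that case alone rather than clamping it to zero.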

The results were pretty simple: between the initial learning and a test of savings a short time later, there was a large drop in retention, from the 100% after the list had initially been learned perfectly to 58.2% after just 20 minutes. After that, the reduction in savings became smaller with each interval, so that the difference between savings after 6 days (25.4%) and 31 days (21.1%) was much smaller than the difference between the initial learning and 20 minutes. This pattern gets us this graph (via, who apparently got it from Purdue University):

And that is what the basic Ebbinghaus forgetting curve looks like. It seems simple now, and pretty obvious too, but remember this was produced in a time when quantitatively measuring memory was thought by many to be impossible. This was, however seemingly mundane to us, revolutionary to the psychologist of 1885. Cognitive psychology has come a long way since then (for one, we don’t use ourselves as subjects, and we almost always use more than one), but these are the field’s not so humble beginnings. And so in lieu of naming the blog Ebbinghaus, honoring the discipline’s father with the name of his most famous finding seems appropriate to me.

Sunday, April 28, 2013

Let's see if this takes

So, I've been away from blogging for... a while now, which means that I'm completely out of the habit and this probably won't take, but what the hell, right? Let's see if I can at least post semi-regularly. I'll try, and I mean try, to post something long form(ish) on cognitive science or a related field every Thursday, and the rest of the week will be filled either with nothing or little tidbits that may or may not have anything to do with cognitive science. Now, I'm a procrastinator by nature, so when I say "Thursday," I really mean Thursday, or Friday, or if I'm feeling extra-motivated and under-busy, Wednesday, or maybe even Tuesday of next week. So Thursday it is.
